Imagine you are a Monitoring, Evaluation and Learning (MEL) leader in an international NGO. You’re on the phone with a colleague from the programme team in your organisation:

Colleague: Donor X is interested in livelihood interventions in country Y. Could you have a look at the proposal draft and include some evidence from past programmes to support the interventions we’ve put in the draft?

You: Shouldn’t we look at the evidence before deciding which interventions to put in?

Colleague: Ideally yes, but the proposal is due tomorrow, so we need to keep it simple. We’ve put in the same interventions as the programme we’re about to finish in country Z.

You: Unfortunately, we didn’t get much meaningful data from that project, so we don’t really know whether what we did really helped. And can we really assume that folks in country Y will need the same as in country Z?

Colleague: I hear you. But this is what we’ve done in that sector, so this is what we can pitch on such short notice. Oh, and can you also put a MEL plan and budget together? The donor is most interested in reach, so let’s just put the bare minimum, so we can use most of the resources to maximise reach. Thanks for your help!

Sounds familiar? Variations of that discussion are the norm rather than the exception. You can probably also imagine the kind of discussions happening on the funding side that produce this lack of incentives to learn. Most of the problems that the philanthropic and nonprofit sectors are trying to solve are complex, and most of the solutions that first come to mind have been ineffective, as many systematic reviews have shown. Yet organisations – whether funders or nonprofits – are not learning enough.

It doesn’t have to be this way.

We have worked within mission-driven organisations – both funders and nonprofits – as either MEL staff or as advisers and, here, we draw on this experience to explore how we can build learning cultures. By learning cultures, we mean cultures that value and mainstream reflection and learning from data and act on it to adapt the organisation. Cultures that see MEL as everyone’s responsibility and thereby value open discussion on progress and challenges as they emerge from the data. We look at what a leader or staff member interested in MEL (whether or not they are directly responsible for MEL) can do to help more individuals, or whole parts of the organisation, to be better at learning. And we think these lessons apply widely across organisations, from funders to implementing nonprofits.

Applying a behaviour change lens to the problem

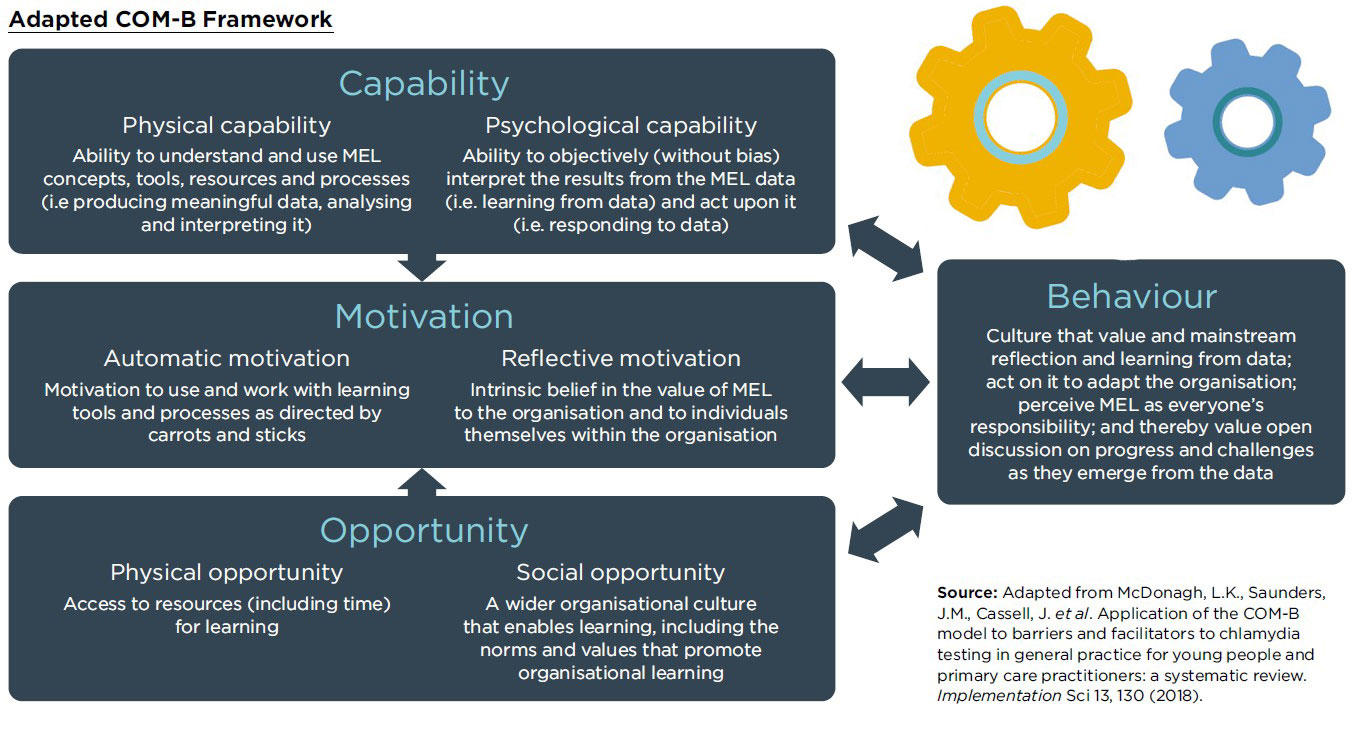

At its core, getting an individual or an organisation to be better at learning is a behaviour change challenge. As such, we look at it through the lens of a well-known behaviour change framework, the COM-B model, which argues that behaviour is influenced by three elements: Capability, Opportunity and Motivation.

In the sections that follow, we reflect on how each of the core elements of the COM-B framework applies to the context of organisational learning.

Strengthening capability for learning

The COM-B model distinguishes between physical capability and psychological capability. In applying this to the context of organisational learning, we define the first as the ability to understand and use MEL concepts, resources and processes (that is, producing meaningful data, then analysing and interpreting it), and the second as the ability to interpret the results from MEL data objectively and act upon them.

In terms of physical capability, typical problems we encountered include lack of familiarity with core MEL concepts (for example, attribution and statistical inference) and goals, and the limited skills to interpret them, particularly among non-MEL staff.

To counter these, we provided technical training carefully targeted to non-technical audiences. Such training is hard to do without, although designing training that is adapted and effective takes a lot of effort, and attendance can be sporadic without the right incentives in place.

We also organised regular discussions between MEL and non-MEL staff about the interpretation of findings and cultivated a user-centred approach, involving non-MEL programme team members in the iterative design of MEL tools and outputs (such as templates for MEL plans, findings briefs or dashboards).

With regard to psychological capability, one of the most common problems we found is a strong tendency, when interpreting MEL data, to give more weight to data that confirm prior beliefs (i.e. confirmation bias) and to question the programme too little.

In order to promote learning and objectivity in data interpretation, therefore, we planned for pre-defined moments of reflection facilitated by MEL staff at the end of a project or project phase and for pre-defined decisions to be taken based on findings. In practice, fully defining decisions upfront is difficult, but to the extent possible, our experience suggests that at least clear targets should be set for what will be satisfactory and what will call for changes.
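
One way to write such targets down before the data arrive is as a simple decision rule. The sketch below is only an illustration under our own assumptions: the class name `DecisionRule`, the indicator, the threshold values and the middle "discuss" band are all hypothetical and are not taken from any specific programme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRule:
    """A pre-agreed reading of one indicator (all names and values are hypothetical)."""

    indicator: str
    satisfactory_at: float  # at or above this value: results are satisfactory
    change_below: float     # below this value: results call for changes

    def __post_init__(self) -> None:
        # The "change" threshold cannot sit above the "satisfactory" one.
        if self.change_below > self.satisfactory_at:
            raise ValueError("change_below must not exceed satisfactory_at")

    def decide(self, observed: float) -> str:
        """Return the decision that was agreed upfront for this observed value."""
        if observed >= self.satisfactory_at:
            return "continue as planned"
        if observed < self.change_below:
            return "adapt the programme"
        # Assumed middle band: results are ambiguous, so they go to the
        # facilitated reflection session rather than triggering a decision.
        return "discuss at the reflection session"


# Hypothetical example: share of participants reporting higher income.
rule = DecisionRule("participants with increased income", 0.60, 0.40)
print(rule.decide(0.72))
print(rule.decide(0.25))
```

Writing the thresholds down at the planning stage means the interpretation of a result is fixed before anyone knows whether it flatters the programme, which is the point of pre-defining decisions.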

In addition, to raise awareness among staff about their cognitive biases, we are considering the possibility of organising explicit training on behavioural biases.

Two key takeaways emerge from our efforts described above to tackle the ‘Capability’ problems:

- First, data require interpretation to be relevant and actionable. Interpretation is best conducted as a joint effort, bringing together different perspectives (e.g. programmatic and MEL). This requires many stakeholders to have a certain minimal technical understanding of the data and of core MEL concepts.

- Second, learning is as much about doing new things as about overcoming (hard-wired) biases. Confirmation bias, resistance to change, positivity bias and others can lead us to misinterpret data, or even inhibit us from seeking evidence in the first place. Directly targeting biases may well be an overlooked area in strengthening organisational learning capacities.

Creating opportunity – or an enabling environment – for change

Tackling individuals’ MEL capability problems within an organisation is not sufficient to create a learning organisation. An enabling environment is needed to provide the opportunity for individuals to use their MEL knowledge and skills. The COM-B framework suggests that this encompasses both the physical opportunity (e.g. funds and time) and the social opportunity (e.g. the prevailing culture, norms and values).

In terms of physical opportunity, we find that the biggest and most perennial resourcing question is how to budget for MEL. Budgets are seldom generous. Sometimes blanket rules are provided, which typically set MEL spending at two to five per cent of the total budget. Instead, we tried to establish rules of thumb for MEL budgeting based on the level of maturity of the interventions: while even two per cent might be enough in the context of a proven programme operating at a very large scale, the optimal MEL spending for a small-scale innovative pilot, where learning ought to be the primary goal, could well be over 30 per cent of the total budget.
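
To make the arithmetic concrete, here is a minimal sketch of a maturity-based rule of thumb. Only the two anchor shares (two per cent and 30 per cent) come from the text above; the stage labels, the function name `mel_budget` and the example budgets are hypothetical, and 0.30 is used as the lower bound of "over 30 per cent". Shares for intermediate stages are deliberately left out, since they would need to be set by each organisation.

```python
# Illustrative MEL budget shares by maturity stage.
# Only these two anchor values appear in the article; the stage labels are
# hypothetical, and 0.30 stands for the lower bound of "over 30 per cent".
MEL_SHARE_BY_STAGE = {
    "proven_at_scale": 0.02,
    "innovative_pilot": 0.30,
}


def mel_budget(total_budget: float, stage: str, shares: dict = MEL_SHARE_BY_STAGE) -> float:
    """Return the indicative MEL allocation for a programme at a given stage."""
    if total_budget < 0:
        raise ValueError("total_budget must be non-negative")
    if stage not in shares:
        raise KeyError(f"no MEL share defined for stage {stage!r}")
    return total_budget * shares[stage]


# Hypothetical budgets, chosen only to show the contrast between stages.
print(f"{mel_budget(1_000_000, 'proven_at_scale'):,.0f}")  # prints 20,000
print(f"{mel_budget(200_000, 'innovative_pilot'):,.0f}")   # prints 60,000
```

In this made-up example the small pilot ends up with a larger MEL allocation in absolute terms than a programme five times its size, which is what a maturity-based rule implies and a blanket percentage would hide.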

Another typical problem we face is the lack of time for programme staff to engage with MEL: managing day-to-day priorities often ends up taking precedence. To counter this, we developed data-use plans at the MEL planning stage, setting out how each piece of data will be used and when, and aligning this with existing organisational routines to avoid additional burden.

Finally, to improve decision-making processes, we tried to adopt user-centred approaches when sharing results, making it easy to base decisions on them. For funders specifically, we developed proposal and reporting templates that ask for key questions and lessons, and that encourage iterative adjustment of the indicators rather than fixed logframes.

With regard to social opportunity, a characteristic challenge is that the organisation does not value iterative learning (including recognition of failures) over making something look good to please leadership, funders or other stakeholders.

We find that efforts to create a wider organisational culture that enables learning often start with leadership that defines a clear organisational strategy, sets the tone regarding learning needs, and invests accordingly. More granular efforts can follow. For example, holding regular internal ‘brownbag’ sessions (with engagement from leadership) that focus on the learning journey of an intervention can help to make learning feel less abstract and out of reach. Providing training and coaching to MEL staff on how to give feedback constructively, to encourage discussions around failure, can also be helpful.

Finally, one way to build momentum that we saw succeed in one large INGO was to start by investing in a strong positive ‘exemplar’ within one programme, to test an improved approach to MEL and showcase the process and benefits to the rest of the organisation. There was a deliberate effort to involve influential staff members from throughout the organisation in the development of this exemplar through a steering committee. This ensured that they had a close understanding and ownership of the elements that had made this exemplar happen, which enabled the almost seamless adoption of those elements throughout the organisation.

In tackling these ‘Opportunity’ problems, a couple of key messages emerged for us:

- First, while concrete tools and processes help to mitigate the tendency to deprioritise MEL among staff, the importance of making learning a core part of the strategy with leadership back-up should not be underestimated.

- Second, creating social opportunities across the organisation can face resistance and be a slow process. A key aspect is to make learning as achievable and concrete as possible, by showing what that looks like within the organisation.

In this last section, we focus on our insights on efforts to implement the third enabling element of the COM-B framework – namely motivation – for organisational learning to take place.

Igniting motivation for learning

For this last component, we draw on the framework’s distinction between automatic motivation and reflective motivation. We conceptualise the first as the motivation to work with the learning tools and processes because you are directed to do so, and the second as stemming from an intrinsic belief in the value of MEL to the work and to the organisation.

In terms of automatic motivation, the major problem we see in our work is that individuals do not easily engage in iterative learning (including embracing failures) but are instead more inclined to make something look good. This is even more acute in the philanthropic and nonprofit sectors, given that rewards are much harder to align with credible success metrics.

We find that ways to overcome this are to include learning objectives in performance reviews for team members and in budget planning, and to frame project goals around solving problems rather than ‘coming up with the right version of our solution’. This puts the focus on the problem and creates space to think outside the box of an existing solution.

With respect to reflective motivation, we find that it can be challenging for individuals to see how changing their ways of working benefits them and their work. To help overcome this, it can be useful to ensure that individuals who join the organisation have the right mindset (for example, by testing for a learning mindset when recruiting new staff). We also find that it is helpful to engage meaningfully with programme staff at the MEL planning stage by, for example, having them set the questions that will be answered, and then continually referring to their questions when sharing results.

In reflecting on the motivation challenges we have faced, two key considerations emerged for us:

- First, reflective motivation needs to be supported by automatic motivation: carrots and sticks to promote learning.

- Second, we have not come up with many concrete ways to promote reflective or intrinsic motivation. In a sense, reflective motivation – or the intrinsic belief in the value of MEL – is the holy grail and what we are ultimately aspiring to. We hope and expect that it would be gradually enhanced over the years with the other components in place.

Some conclusions

As is often the case when addressing complex, multi-stakeholder behaviour, building learning cultures within organisations requires a multi-pronged approach. While aspects of opportunity and motivation often take more time and effort to achieve within an organisation, a solely capability-focused approach will not cut it.

Related to this is the importance of leadership support. By this, we mean the leadership within individual organisations, but also leadership shown by entire organisations in an ecosystem. Funders who genuinely prioritise learning, and match this with relevant resources and ways of working with their grantees, may be able to unblock the route to proper organisational learning. They therefore bear an important responsibility to enable learning to take place within a wider ecosystem. Many implementing organisations need to respond to incentives coming from funding. If those incentives truly prioritise and enable learning, a real shift in the ecosystem is possible to collectively build towards better results.

Joachim Krapels is Chief Science Officer in the Effective Philanthropy Group at Porticus

Email: j.krapels@porticus.com

Julie Bélanger is Director at Better Purpose

Email: julie.belanger@betterpurpose.co

Loïc Watine is Director of Right Fit Evidence Advisory Unit at Innovations for Poverty Action

Email: lwatine@poverty-action.org

